Source: Adobe

In the past month, investor intuition has become highly attuned to the critical role of semiconductors. These devices underpin artificial intelligence (AI) across thousands of applications, from music to missile defence. This was strikingly demonstrated when the share prices of certain AI-exposed semiconductor companies soared.

The strategy’s exposure to semiconductor companies is based on years of research and our understanding of the disruption life cycle. Investing in disruption is a multi-year, even multi-decade, journey – it’s not just about which companies will benefit, but also when those companies will benefit and, critically, at what valuation.

Generative AI applications such as ChatGPT (for text) and DALL-E (for images) simply cannot run at scale without the requisite build-out of compute capacity. It therefore makes sense that the semiconductor companies behind this compute would be the first to benefit, and they have been.

Nvidia’s near-record addition of ~US$200bn in market capitalisation in a single day wasn’t without reason – it followed second-quarter revenue guidance that came in more than 50% above consensus (US$11bn vs the US$7bn expected by financial analysts), along with management commentary that the company is ramping production to meet a “substantial increase in the second-half compared to the first half.” Companies like Nvidia are generating tangible revenue and earnings from AI right now.
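Using only the rounded figures quoted above, the size of the beat against consensus can be checked directly. Because both inputs are rounded, the exact percentage depends on the unrounded guidance and consensus numbers, which are not given here:

```latex
\text{beat} = \frac{\text{guidance} - \text{consensus}}{\text{consensus}}
            = \frac{11 - 7}{7} = \frac{4}{7} \approx 0.57 \quad (\approx 57\%)
```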

Disruption necessitates active management

In the five months to 31 May, the average exposure of the portfolios under management to Semiconductors & Semiconductor Equipment (an industry as defined by the Global Industry Classification Standard (GICS)) was 38.9%. The exposure of the MSCI All Country World Index was much lower, at 5.3%; this index is the strategy’s benchmark, and one which many passive (or index-based) funds use to construct portfolios.
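The gap between the portfolio and its benchmark can be expressed in two common ways: as an active weight (portfolio weight minus benchmark weight, a standard portfolio-management measure used here for illustration) and as a multiple of the benchmark weight. Both use only the two figures quoted above:

```latex
\text{active weight} = w_{\text{portfolio}} - w_{\text{benchmark}} = 38.9\% - 5.3\% = 33.6 \text{ percentage points}
\qquad
\frac{w_{\text{portfolio}}}{w_{\text{benchmark}}} = \frac{38.9}{5.3} \approx 7.3
```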

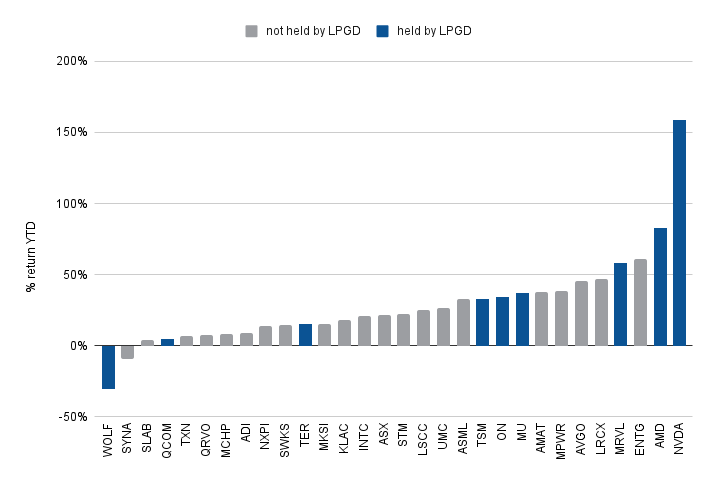

Even within semiconductors, not every company is benefiting equally, as the performance of constituents of the Philadelphia Semiconductor Index (SOX), a basket of some of the largest semiconductor companies, shows.

Not all semiconductor companies are beneficiaries of AI

Source: Bloomberg – LPGD Holdings as at 31/03/2023

This reveals some of the problems of passive index investment: indices are broad and constructed on the basis of companies’ historical success rather than with an eye to the future. As recently as 2022, the SOX’s largest position was CPU maker Intel (INTC). However, this reflected the company’s past success and overlooked the significant market share it was ceding to Advanced Micro Devices (AMD). Share prices are catching up to this reality, with AMD significantly outperforming Intel over multiple time frames (6 months, 1 year, 3 years and 5 years).

The same logic applies to gaining exposure to AI.

Understanding why AI benefits some companies

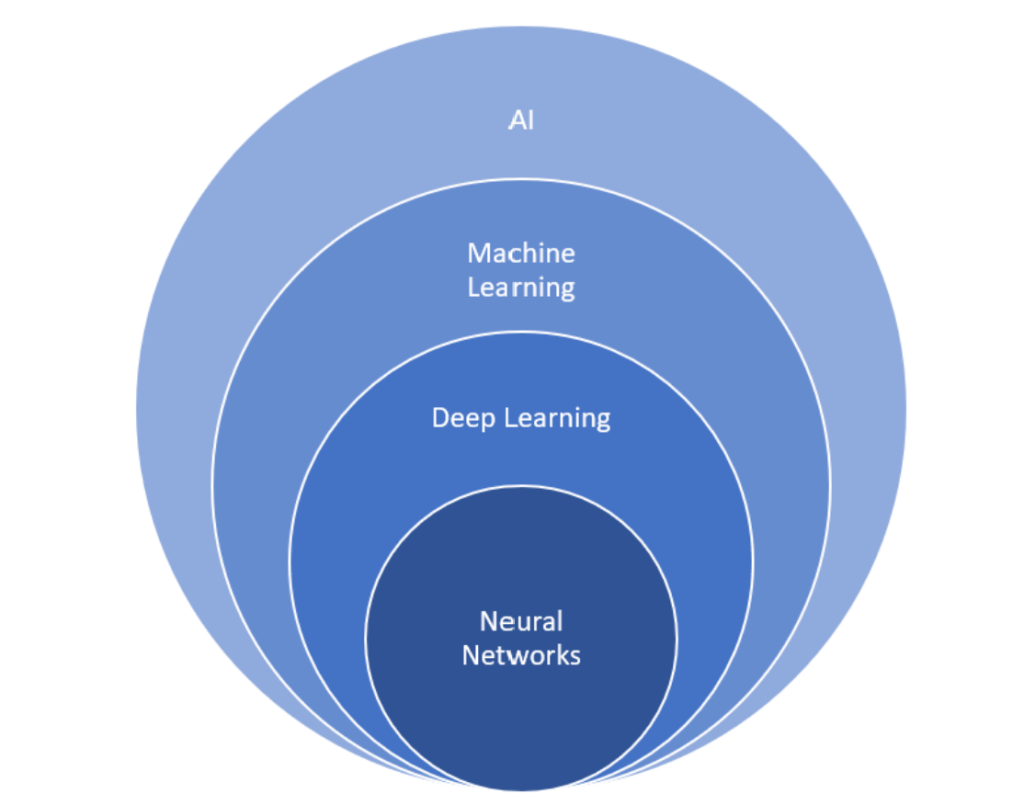

AI is underpinned by various programmatic techniques. Models structured to process data in a way loosely inspired by the human brain (i.e. through layers of interconnected artificial “neurons”) are known as neural networks. Any device, from a calculator to a washing machine, might run some level of AI, but specialised hardware is required to develop and run neural network models at scale.

This is a crucial distinction. Practically any company with a digital slant can (rightfully) claim to utilise AI, but only a handful of specialists can leverage neural networks.

Neural networks are a specific subset of AI

Source: Loftus Peak
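To make the idea of “neurons” less abstract, here is a minimal sketch of a tiny neural network: each neuron takes a weighted sum of its inputs, adds a bias and squashes the result. The function names and weights are hypothetical, hand-picked values for illustration only, not a trained model and not how any particular company builds its models:

```python
import math


def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """A single artificial 'neuron': a weighted sum of its inputs plus a bias,
    passed through a sigmoid so the output lies between 0 and 1."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def layer(inputs: list[float], weight_rows: list[list[float]], biases: list[float]) -> list[float]:
    """A layer is many neurons reading the same inputs; each row of weights is one neuron."""
    return [neuron(inputs, row, b) for row, b in zip(weight_rows, biases)]


def tiny_network(x: list[float]) -> float:
    """Two inputs -> two hidden neurons -> one output neuron.
    The weights are hypothetical, hand-picked values, not learned from data."""
    hidden = layer(x, [[0.5, -0.2], [0.3, 0.8]], [0.0, -0.1])
    (output,) = layer(hidden, [[1.2, -0.7]], [0.05])
    return output


if __name__ == "__main__":
    print(round(tiny_network([1.0, 0.5]), 4))
```

Real models stack many such layers and learn their weights from data. Every weight implies a multiply-add for each input processed, and large models have billions of weights, which is why this work is done on hardware such as GPUs and TPUs that perform enormous numbers of multiply-adds in parallel.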

Large language models (LLMs) are built using neural networks, and the headline examples are truly gargantuan: Google’s PaLM-540B was trained over more than two months, at an estimated cost of tens of millions of dollars, on more than 8,000 tensor processing units (TPUs), Google’s in-house hardware.

Similar amounts of hardware are needed to develop models of comparable scale, such as GPT-3 (the basis of the GPT-3.5 models behind ChatGPT), Gopher and others. Larger parameter counts, as is widely assumed for GPT-4, require a further increase in the scale of hardware (OpenAI has not disclosed GPT-4’s parameter count or the specifics of its development process).
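The link between parameter count and hardware can be sketched with two small helpers. The first counts weights and biases in a stack of fully connected layers; the second applies a widely cited rule of thumb from the scaling-law literature (training compute of roughly 6 floating-point operations per parameter per training token), which is an assumption here rather than a figure from this article. The function names, model sizes and token count are all hypothetical:

```python
def dense_parameter_count(layer_sizes: list[int]) -> int:
    """Weights plus biases for a stack of fully connected layers.
    Between layers of size a and b there are a*b weights and b biases."""
    return sum(a * b + b for a, b in zip(layer_sizes, layer_sizes[1:]))


def approx_training_flops(parameters: float, training_tokens: float) -> float:
    """Rule-of-thumb estimate (an assumption, not from this article):
    about 6 floating-point operations per parameter per training token."""
    return 6.0 * parameters * training_tokens


if __name__ == "__main__":
    # Hypothetical model sizes and token count, chosen only to show the scaling.
    tokens = 1e12
    for params in (1e11, 2e11, 4e11):
        print(f"{params:.0e} parameters -> ~{approx_training_flops(params, tokens):.1e} FLOPs")
```

Under this approximation, compute grows linearly with parameter count for a fixed amount of training data, so doubling model size roughly doubles the hardware-time required.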

Currently the graphics processing unit (GPU) is the most effective piece of kit for AI development, and the world’s most advanced GPUs are designed by Nvidia. This has pushed the company’s market capitalisation towards the US$1 trillion mark.

However, investing in AI isn’t limited to the GPU.

Loftus Peak’s portfolio approach to AI

The sheer size of AI workloads demands that data be moved as quickly as possible, which makes high-speed networking equipment (routers, switches and cabling) mandatory; Arista Networks is one of the leading suppliers. There is also a wide range of additional componentry, sometimes tailored to a specific datacentre architecture, and this custom silicon is often designed by Marvell Technology. Furthermore, any increase in the world’s overall compute requirements will drive greater demand for CPUs, a domain in which Advanced Micro Devices continues to take market share from the incumbent, Intel.
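To illustrate why link speed matters, the idealised time to move S gigabytes over a link of B gigabits per second is shown below. The 100 GB payload and the 10 Gb/s and 400 Gb/s link speeds are hypothetical round numbers; real transfers take longer once protocol overhead and congestion are included:

```latex
t = \frac{8 \times S_{\text{GB}}}{B_{\text{Gb/s}}}
\qquad
\frac{8 \times 100}{10} = 80\ \text{s}
\qquad
\frac{8 \times 100}{400} = 2\ \text{s}
```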

We also expect AI to be deployed on edge devices where possible, as this will ultimately yield security and latency advantages. Edge hardware capable of running ChatGPT-sized models is currently out of reach; however, today’s devices are already capable of image recognition and manipulation. Advanced driver assistance systems (lane keeping, parking assist, etc.) and onboard infotainment are well within the wheelhouse of chip designer Qualcomm.

But it won’t just be semiconductor companies that win.

The next phase of disruption in AI

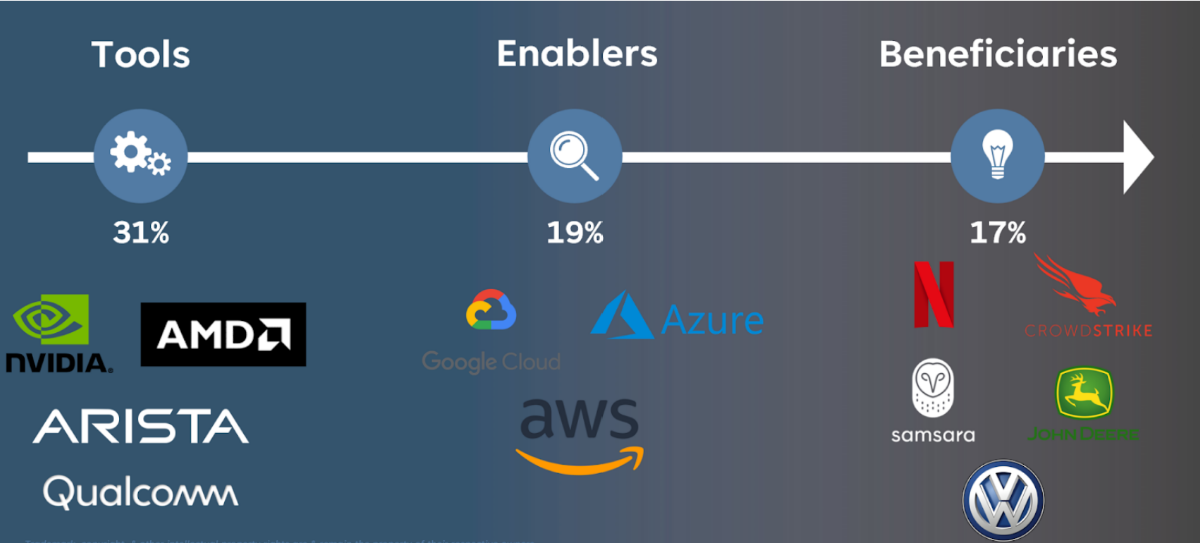

We think of our exposure to disruption in three distinct categories. There are the companies which make the tools, like those listed above; the companies enabling AI, namely the hyperscalers such as Google, Microsoft and Amazon; and the AI beneficiaries.

The graphic below gives some clarity on this.

The disruption lifecycle of AI

Source: Loftus Peak

Like the tools, datacentres are a mandatory component of AI. In 2022, Amazon, the largest datacentre operator in the world, spent US$64bn on capex, approximately half of which went towards expanding its cloud business. Microsoft and Google were very much in the race, spending US$25bn and US$31bn, respectively. These companies have built substantial moats and are well positioned as demand grows for computationally heavy AI functionality, including LLMs.

In fact, Microsoft has indicated that one percentage point of Azure’s revenue growth (~US$700m on an annual basis) is already coming from AI services. While it is still early days for Azure and other AI-exposed enablers, these revenues are clearly picking up steam.

Further downstream are the beneficiaries of AI. These are the companies that are reaping the rewards of the technological infrastructure laid out by the tool and enabler companies, often in the form of leveraging data to operate more efficiently.

For now, we remain sceptical of companies declaring themselves beneficiaries of generative AI before it shows up in their revenue and earnings; after all, even newspapers thought the internet would benefit them. As we have explained previously, many companies in the portfolio are benefiting from the use of discriminative AI today. CrowdStrike provides AI-powered endpoint cybersecurity, and Samsara uses AI to sift through its customers’ trillions of data points to optimise their physical operations (particularly in fleet management and equipment monitoring).

In recent decades, Loftus Peak has observed the pace of change accelerating, fuelled by an explosion of data and the insights generated from it (the growing application of AI). If the speed of advancement in generative AI has taught us anything, it’s that change in the coming decade will be at least as fast, if not faster. In an environment where companies’ moats are solidified or eroded in what feels like the blink of an eye (or at least faster than passive ETFs can rebalance), investing with a view to the future is a critical success factor.
